Vision-based Robotic Citrus Harvesting

Introduction

Mechanization of fruit harvesting is highly desirable in developing countries because of decreasing seasonal labor availability and increasing economic pressures. Automated robotic harvesting systems are preferred over mechanical harvesting systems because they deliver superior quality of the harvested fruit. Vision-based autonomous robotic citrus harvesting requires detecting the target and inferring its 3-dimensional (3D) Euclidean position from 2D images. Traditional visual servo control assumes that the camera can be positioned a priori at the desired position and orientation, which is not possible in the unstructured citrus harvesting application. A new strategy is therefore required, which led to the development of a visual servo controller based on 3D target reconstruction. The 3D reconstruction uses statistical data about the target, together with the target's size in the image and the camera focal length, to generate the 3D depth information. The controller achieves exponential stability, regulating the robot arm to the center of the target fruit. Experiments demonstrate the feasibility of the controller for an unstructured citrus harvesting application.
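The depth reconstruction described above can be illustrated with a minimal sketch. It assumes an ideal pinhole camera and takes the "statistical data" to be a mean fruit diameter, so that depth follows from similar triangles as Z = f·D/d. The class and function names, the pixel-unit focal length, and the principal point parameters are hypothetical and are not taken from the project's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PinholeCamera:
    """Assumed ideal pinhole camera (no lens distortion)."""
    focal_length_px: float  # focal length expressed in pixels
    cx: float               # principal point, horizontal pixel coordinate
    cy: float               # principal point, vertical pixel coordinate


def estimate_depth(camera: PinholeCamera,
                   fruit_diameter_m: float,
                   image_diameter_px: float) -> float:
    """Depth from similar triangles: Z = f * D / d.

    fruit_diameter_m is an assumed statistical (mean) fruit diameter;
    image_diameter_px is the measured diameter of the fruit in the image.
    """
    if image_diameter_px <= 0:
        raise ValueError("image diameter must be positive")
    return camera.focal_length_px * fruit_diameter_m / image_diameter_px


def reconstruct_position(camera: PinholeCamera,
                         fruit_diameter_m: float,
                         u: float,
                         v: float,
                         image_diameter_px: float) -> tuple[float, float, float]:
    """Back-project the fruit center (u, v) to camera-frame coordinates."""
    z = estimate_depth(camera, fruit_diameter_m, image_diameter_px)
    x = (u - camera.cx) * z / camera.focal_length_px
    y = (v - camera.cy) * z / camera.focal_length_px
    return x, y, z
```

Under these assumptions, a fruit that appears smaller in the image is estimated to be farther away, and the accuracy of the estimate depends on how closely the actual fruit matches the assumed mean diameter.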

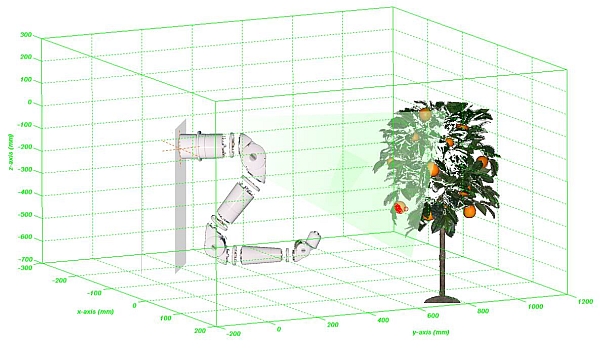

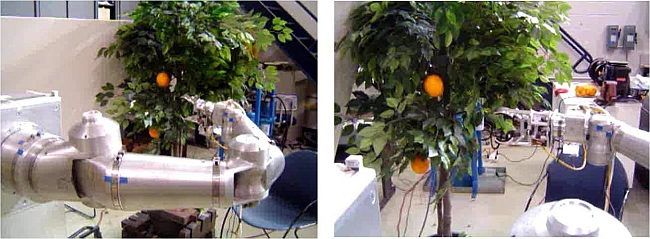

Experimental Setup

- The hardware setup consists of (1) a robotic manipulator; (2) an end-effector; (3) a robot servo controller; (4) an image processing workstation; and (5) network communication.

- Robotic manipulator: Robotics Research K-1207i, a 7-axis, kinematically redundant manipulator.

- End-effector: a 3-link, electrically actuated gripper mechanism developed by S. Flood at the University of Florida.

- Robot servo control unit: consists of the INtime real-time component (R2 RTC) and the NT client-server upper control level component.

- Image processing workstation: runs the citrus detection, feature point identification and tracking, and TBZ control algorithms, developed in Visual C++.

- Network communication: INtime-based real-time communication has been established between the robot servo control workstation and the image processing workstation.

Experimental Results

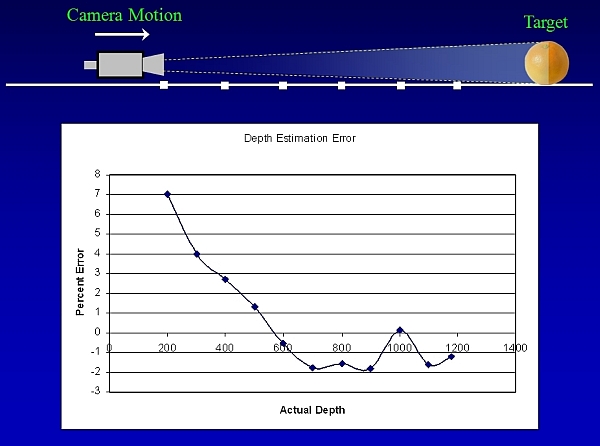

Performance validation of 3D depth estimation: a preliminary experiment

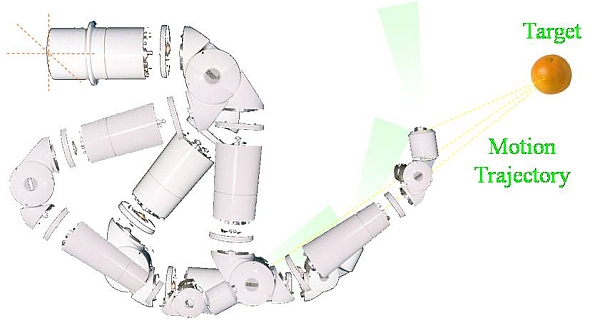

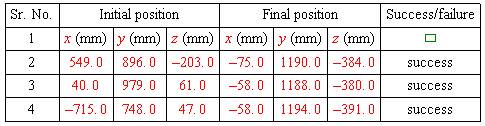

- Experiment I: a repeatability test

The Euclidean position and orientation of the end-effector (at the beginning of the trajectory) and of the target are constant for all iterations.

3D plot showing repeatability of the control scheme

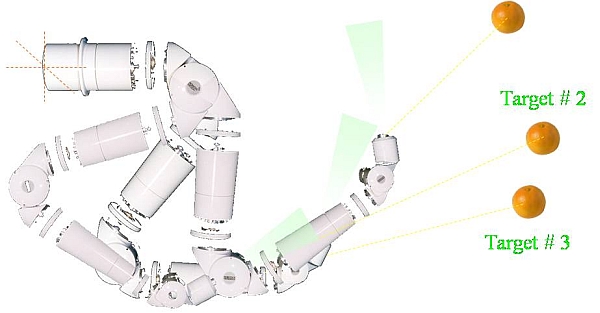

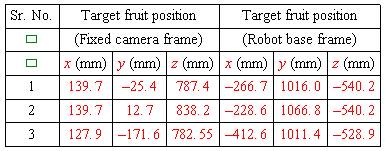
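One way to quantify repeatability from a test of this kind is to summarize the spread of the final end-effector positions across iterations. The sketch below is an illustration only: the function name and the choice of metrics (centroid, per-axis population standard deviation, and maximum distance from the centroid) are assumptions, not the analysis used in the experiments.

```python
import math
from statistics import mean, pstdev


def repeatability(final_positions):
    """Summarize the spread of final end-effector positions.

    final_positions: list of (x, y, z) tuples, one per iteration.
    Returns (centroid, per-axis standard deviation, max distance to centroid).
    """
    if not final_positions:
        raise ValueError("at least one position is required")
    # Regroup the points into one sequence per axis: xs, ys, zs.
    axes = list(zip(*final_positions))
    centroid = tuple(mean(axis) for axis in axes)
    spread = tuple(pstdev(axis) for axis in axes)
    # Largest Euclidean distance between any final position and the centroid.
    max_deviation = max(math.dist(p, centroid) for p in final_positions)
    return centroid, spread, max_deviation
```

A small per-axis spread and a small maximum deviation would indicate that the controller drives the end-effector to nearly the same final position on every iteration.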

- Experiment II

- The Euclidean position and orientation of the target are constant for all iterations.

- The Euclidean position of the end-effector is varied for each iteration.

- Multiple targets are present in the field of view of the in-hand camera.

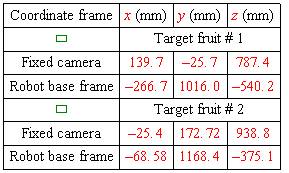

Actual Target Position

Robot end-effector position at the end of motion trajectory

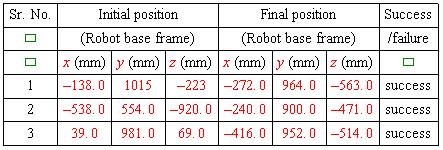

- Experiment III

- The Euclidean position and orientation of the target and of the end-effector (at the beginning of the motion trajectory) are varied.

Actual Target Position

Robot end-effector position at the end of motion trajectory

- Experimental Demonstration

Publications

S. S. Mehta, T. Burks, W.E. Dixon, Vision-Based Localization of a Wheeled Mobile Robot for Greenhouse Applications: A Daisy-Chaining Approach, Transactions on Computers and Electronics in Agriculture, pp. 28-37, vol. 63, 2008.